
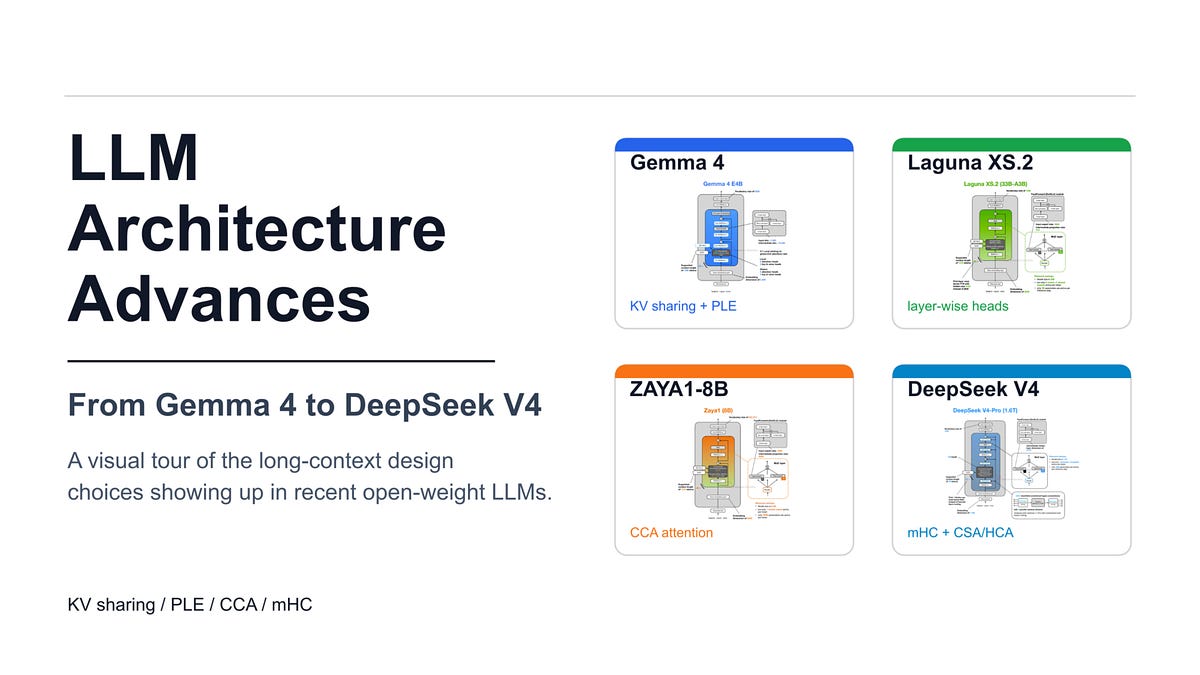

New LLM Architectures Slash Long-Context Costs

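KV sharing and compressed attention now power models like Gemma 4 and DeepSeek V4. Both techniques shrink the key-value (KV) cache, the per-token memory that grows linearly with context length and dominates the memory cost of long context windows. KV sharing lets several layers or heads reuse one set of keys and values, while compressed attention stores a smaller representation of each token. Because less data is stored and read per token, inference costs fall, and practitioners can deploy long-context applications with less VRAM.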
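
To make the memory argument concrete, here is a rough back-of-the-envelope sketch of KV cache size. The function name `kv_cache_bytes` and every number in it (layer count, head count, head dimension, context length) are hypothetical and are not taken from Gemma 4, DeepSeek V4, or any other real model. KV sharing is modeled in the simplest possible way, by assuming only half of the layers store their own keys and values.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_elem=2, batch=1):
    """Estimate KV cache size for a standard attention stack.

    The leading factor of 2 counts one key tensor and one value tensor
    per layer that stores its own cache. bytes_per_elem=2 assumes
    16-bit values (fp16 or bf16).
    """
    return 2 * batch * seq_len * n_layers * n_kv_heads * head_dim * bytes_per_elem


GIB = 1024 ** 3

# Hypothetical configuration, chosen only for illustration.
baseline = kv_cache_bytes(seq_len=128_000, n_layers=32, n_kv_heads=8, head_dim=128)

# Assumption: with KV sharing, pairs of layers reuse one cache,
# so only 16 of the 32 layers store keys and values.
shared = kv_cache_bytes(seq_len=128_000, n_layers=16, n_kv_heads=8, head_dim=128)

print(f"baseline: {baseline / GIB:.2f} GiB")
print(f"shared:   {shared / GIB:.2f} GiB")
```

Under these assumptions the cache scales linearly with both context length and the number of layers that keep their own keys and values, so halving the latter halves the cache. Real architectures combine sharing with other savings, so actual figures will differ.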