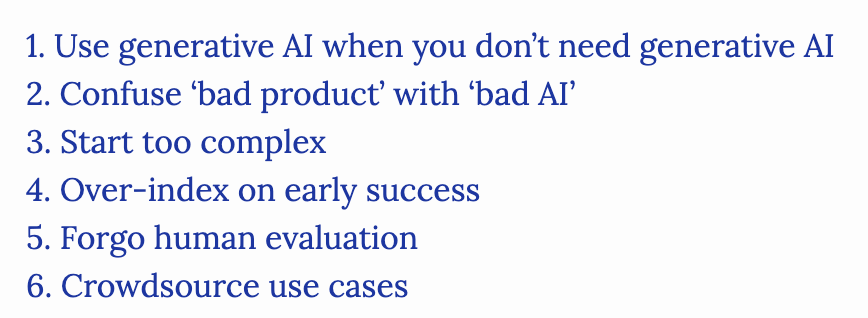

Common Pitfalls In Generative AI Development

Reaching for a foundation model when a simpler method would do remains one of the most common mistakes in generative AI development. Chip Huyen points to the tendency to treat generative AI as a universal solution, citing an attempt to optimize energy consumption with an LLM. Misapplied this way, the model consumes resources without a clear benefit over simpler approaches. When a task requires precise, predictable outputs, practitioners should prefer deterministic logic to stochastic generation.
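To illustrate the point about deterministic logic, here is a minimal Python sketch of a rule-based approach to an energy-scheduling task. The task framing (assigning household activities to the cheapest hours of the day), the function name `schedule_cheapest_hours`, and the sample prices are all assumptions made for illustration; they are not taken from Huyen's example.

```python
from typing import Dict, List


def schedule_cheapest_hours(
    hourly_prices: List[float], activities: Dict[str, int]
) -> Dict[str, List[int]]:
    """Assign each activity the number of hours it needs, cheapest hours first.

    Hypothetical simplification: each hour hosts at most one activity, and an
    activity's hours do not have to be contiguous.
    """
    # Sort hour indices by price; ties break on the hour index so the
    # result is fully deterministic.
    cheapest_first = sorted(
        range(len(hourly_prices)), key=lambda h: (hourly_prices[h], h)
    )
    if sum(activities.values()) > len(cheapest_first):
        raise ValueError("more activity-hours requested than hours available")

    schedule: Dict[str, List[int]] = {}
    cursor = 0
    # Longest-running activities claim the cheapest hours first.
    for name, hours_needed in sorted(
        activities.items(), key=lambda kv: (-kv[1], kv[0])
    ):
        schedule[name] = sorted(cheapest_first[cursor:cursor + hours_needed])
        cursor += hours_needed
    return schedule


if __name__ == "__main__":
    # Illustrative prices per hour (made-up values) and hours needed per activity.
    prices = [0.30, 0.10, 0.20, 0.05]
    print(schedule_cheapest_hours(prices, {"dishwasher": 1, "ev_charging": 2}))
```

The same inputs always yield the same schedule, which is the property the paragraph above asks for. A baseline like this also gives a reference point: an LLM-based version would need to beat it to justify its extra cost.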