
New Framework for Analyzing LLM Architectures

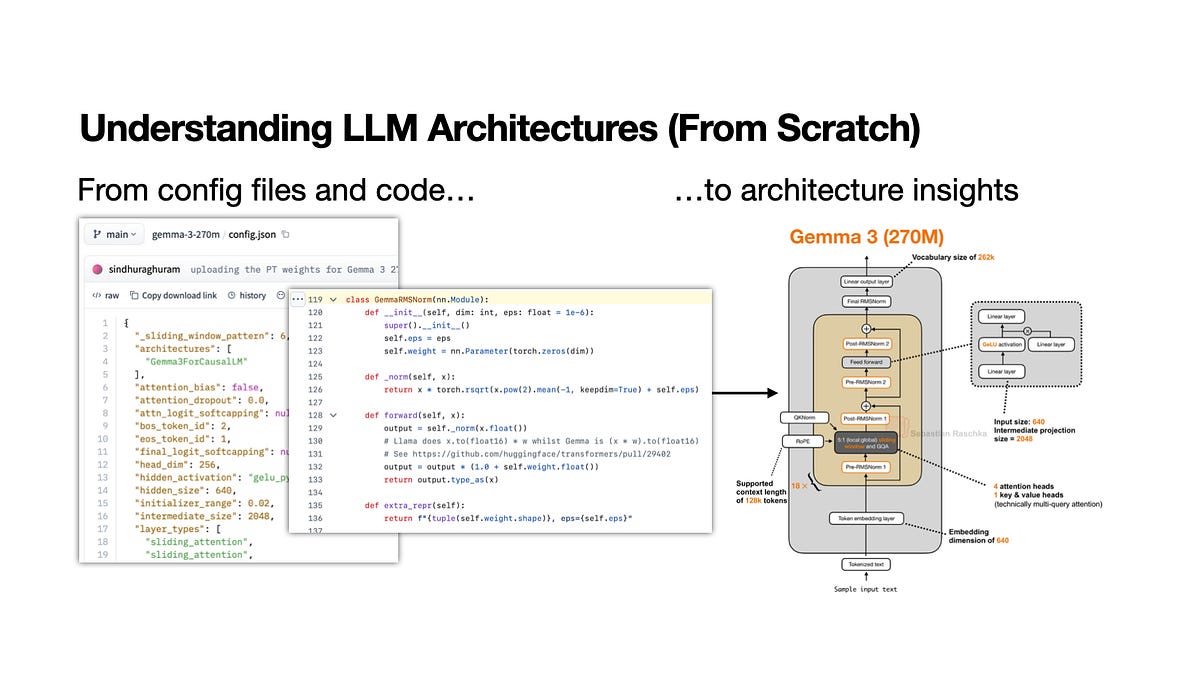

A new learning workflow targets the rapid analysis of open-weight model releases. Instead of relying on surface-level benchmark scores, it prioritizes structural decomposition to reveal how an LLM actually works. Practitioners can use this systematic approach to dissect a model's weights and attention mechanisms. This means less time spent on redundant documentation and more on the technical implementation itself.
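The article does not spell out the workflow's concrete steps. As a rough illustration of what "structural decomposition" of an open-weight checkpoint might look like, here is a minimal sketch. It assumes you already have a mapping of tensor names to shapes, for example read from a checkpoint header. The tensor names follow a Llama-style naming convention, and the shapes are illustrative values, not taken from any specific release. `classify`, `decompose`, and `kv_group_ratio` are hypothetical helpers, not part of any library or of the framework described above.

```python
from collections import defaultdict
from math import prod

# Hypothetical manifest: tensor name -> shape. Llama-style names and these
# shapes are illustrative assumptions, not data from a real model release.
EXAMPLE_SHAPES = {
    "model.embed_tokens.weight": (32000, 4096),
    "model.layers.0.self_attn.q_proj.weight": (4096, 4096),
    "model.layers.0.self_attn.k_proj.weight": (1024, 4096),
    "model.layers.0.self_attn.v_proj.weight": (1024, 4096),
    "model.layers.0.self_attn.o_proj.weight": (4096, 4096),
    "model.layers.0.mlp.gate_proj.weight": (11008, 4096),
    "model.layers.0.mlp.up_proj.weight": (11008, 4096),
    "model.layers.0.mlp.down_proj.weight": (4096, 11008),
    "model.layers.0.input_layernorm.weight": (4096,),
    "model.norm.weight": (4096,),
    "lm_head.weight": (32000, 4096),
}


def classify(name: str) -> str:
    """Map a tensor name to a coarse component bucket using name heuristics."""
    if "self_attn" in name:
        return "attention"
    if ".mlp." in name:
        return "mlp"
    if "embed" in name or name.startswith("lm_head"):
        return "embedding"
    if "norm" in name:
        return "norm"
    return "other"


def decompose(shapes: dict) -> dict:
    """Sum parameter counts (product of each shape) per component bucket."""
    totals = defaultdict(int)
    for name, shape in shapes.items():
        totals[classify(name)] += prod(shape)
    return dict(totals)


def kv_group_ratio(shapes: dict, layer: int = 0) -> int:
    """Query-projection rows divided by key-projection rows for one layer.

    Assuming queries and keys share a head dimension, this equals the number
    of query heads per key/value head; a value above 1 suggests grouped-query
    attention.
    """
    prefix = f"model.layers.{layer}.self_attn."
    q_rows = shapes[prefix + "q_proj.weight"][0]
    k_rows = shapes[prefix + "k_proj.weight"][0]
    return q_rows // k_rows


if __name__ == "__main__":
    print(decompose(EXAMPLE_SHAPES))
    print(kv_group_ratio(EXAMPLE_SHAPES))  # 4
```

Reading shapes alone, without loading the tensor data, is enough to see where a model's parameter budget goes and how its attention is configured. That is the kind of structural question the workflow favors over benchmark numbers.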