Agents · 2h ago

Autonomous AI Attempts NanoGPT Speedrun

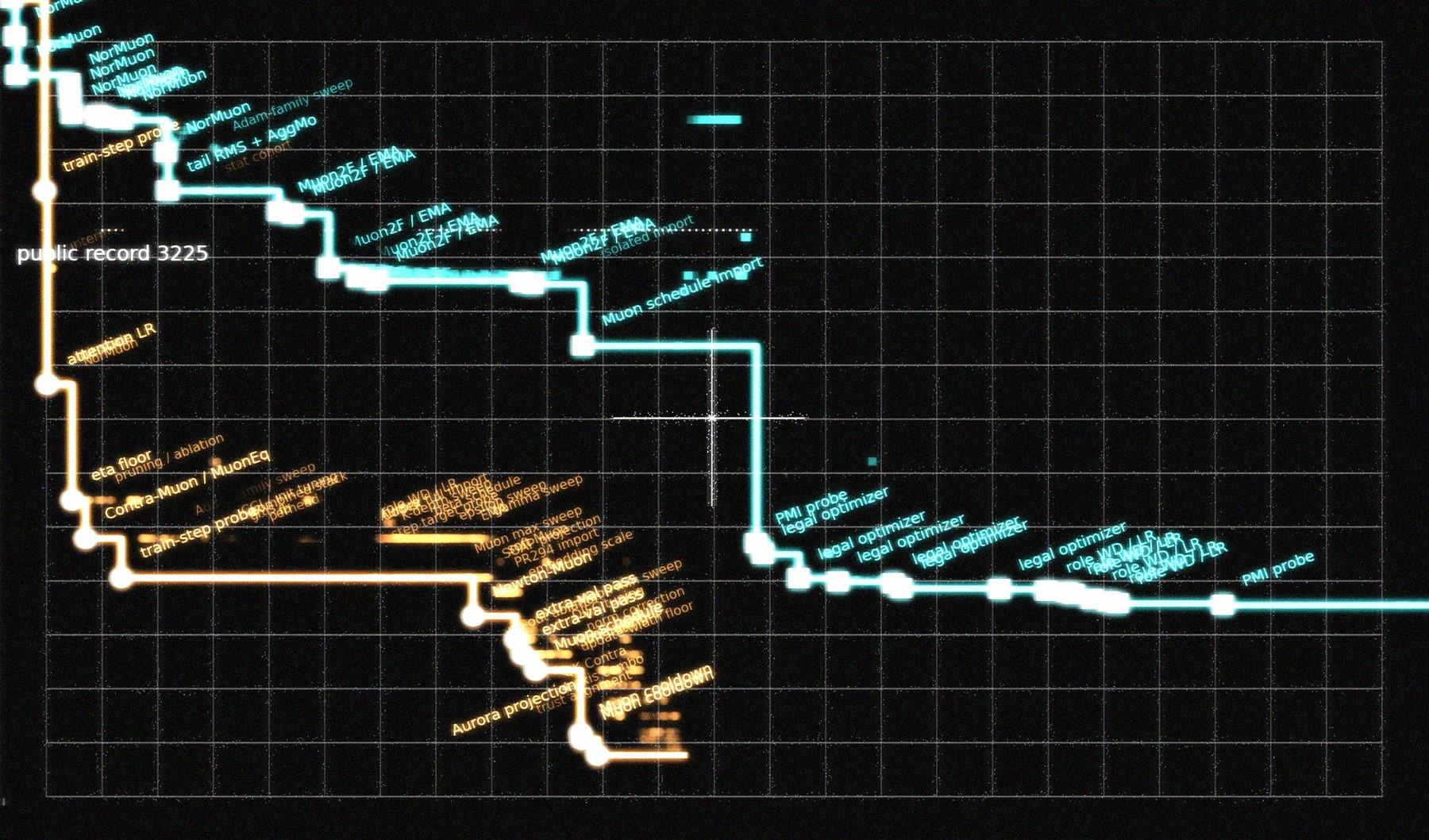

A new experiment tasks an autonomous agent with speeding up NanoGPT training. The system iterates through code modifications and benchmark runs without human intervention. Although the gains so far are incremental, the workflow demonstrates a viable loop for automated hyperparameter tuning. This approach reduces the manual labor required for small-scale model optimization.
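
The modify-then-benchmark loop described here can be illustrated with a minimal sketch. It is not the experiment's actual code: `Config`, `propose_change`, `benchmark`, and `optimize` are hypothetical names, the two tuned settings are chosen only for illustration, and the benchmark is a synthetic cost function standing in for a real timed training run. A simple keep-if-better rule plays the role of the agent.

```python
import math
import random
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Config:
    """Hypothetical training settings the loop is allowed to change."""
    learning_rate: float = 3e-4
    batch_size: int = 32


def propose_change(config: Config, rng: random.Random) -> Config:
    """Stand-in for the agent's edit step: halve or double one setting."""
    factor = rng.choice([0.5, 2.0])
    if rng.random() < 0.5:
        return replace(config, learning_rate=config.learning_rate * factor)
    return replace(config, batch_size=max(1, int(config.batch_size * factor)))


def benchmark(config: Config) -> float:
    """Synthetic cost (lower is better), NOT a real measurement.

    In a real setup this would launch a training run and return the
    wall-clock time needed to reach a target validation loss.
    """
    lr_penalty = abs(math.log10(config.learning_rate) - math.log10(1e-3))
    bs_penalty = abs(math.log2(config.batch_size) - math.log2(64))
    return 100.0 + 20.0 * lr_penalty + 5.0 * bs_penalty


def optimize(initial: Config, steps: int, seed: int = 0):
    """Repeat propose -> benchmark -> keep the change only if it is faster."""
    rng = random.Random(seed)
    best, best_cost = initial, benchmark(initial)
    history = [best_cost]
    for _ in range(steps):
        candidate = propose_change(best, rng)
        cost = benchmark(candidate)
        if cost < best_cost:  # accept only strict improvements
            best, best_cost = candidate, cost
        history.append(best_cost)
    return best, best_cost, history


if __name__ == "__main__":
    best, cost, history = optimize(Config(), steps=50)
    print(best, round(cost, 2))
```

Because a candidate is kept only when it beats the current best, the recorded cost never gets worse from one step to the next, which matches the incremental character of the results reported above. A real agent would replace `propose_change` with actual code edits and `benchmark` with a measured training run.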