Research · 3h ago

New Architectures Slash Long-Context Costs

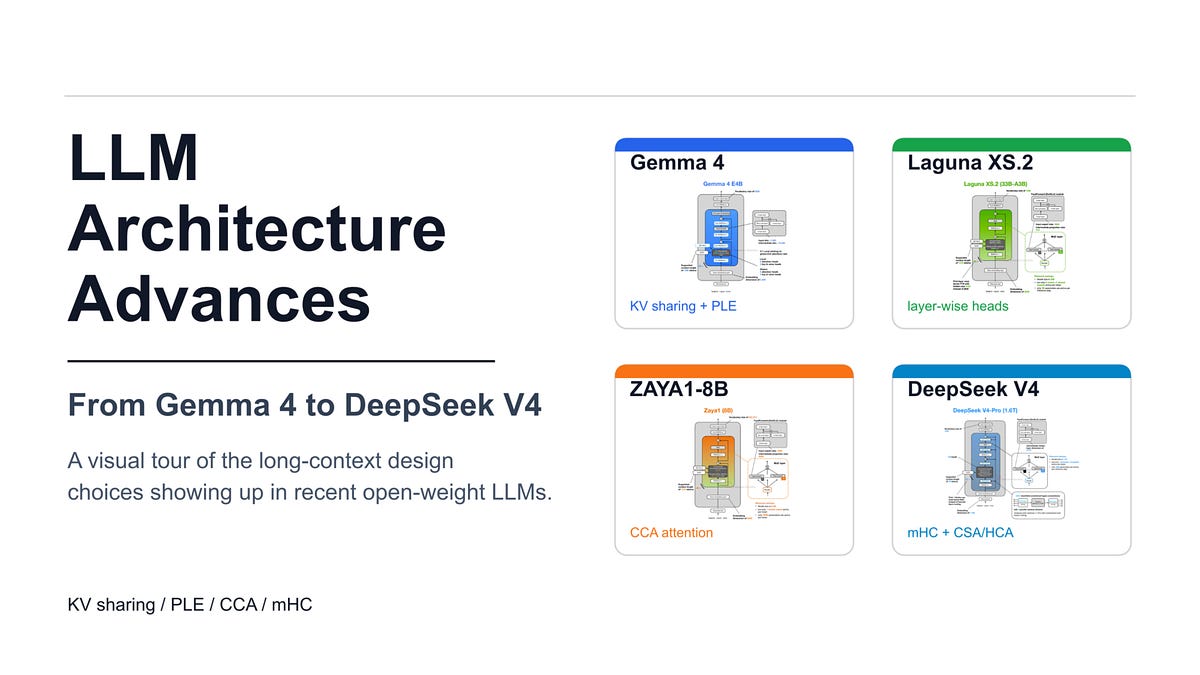

KV sharing and compressed attention mechanisms now define the latest open-weight models, such as Gemma 4 and DeepSeek V4. Both techniques shrink the key-value (KV) cache, which accounts for much of the memory overhead during long-context inference. As a result, developers can handle larger contexts without a proportional increase in VRAM usage, which makes long-context applications viable on consumer-grade hardware.
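
To make the memory argument concrete, here is a rough back-of-the-envelope sketch of KV cache sizing. The function name, the `kv_share_group` parameter, and every model dimension below are illustrative assumptions, not the published configuration of Gemma 4, DeepSeek V4, or any other model. It assumes a plain scheme in which each group of consecutive layers reuses a single cached set of keys and values.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim,
                   bytes_per_elem=2, kv_share_group=1):
    """Estimate KV cache size in bytes for a single sequence.

    kv_share_group is a hypothetical knob: the number of consecutive
    layers that reuse one cached K/V set (1 means no sharing).
    """
    # Only one layer per sharing group stores its own cache (ceiling division).
    caching_layers = -(-n_layers // kv_share_group)
    # Factor of 2 covers the separate key and value tensors.
    return 2 * caching_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


# Assumed dimensions, chosen only for illustration.
cfg = dict(n_layers=32, n_kv_heads=8, head_dim=128)
ctx = 131_072  # 128K-token context, fp16 cache (2 bytes per element)

baseline = kv_cache_bytes(ctx, **cfg)
shared = kv_cache_bytes(ctx, kv_share_group=4, **cfg)

print(f"no sharing:      {baseline / 2**30:.1f} GiB")
print(f"4-layer sharing: {shared / 2**30:.1f} GiB")
```

Under these assumptions the cache drops from 16 GiB to 4 GiB at a 128K context. In this simple model the cache still grows with sequence length; sharing lowers the per-token cost rather than removing the growth, and compressed attention would reduce it further by storing a smaller representation per token.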