Research · 7h ago

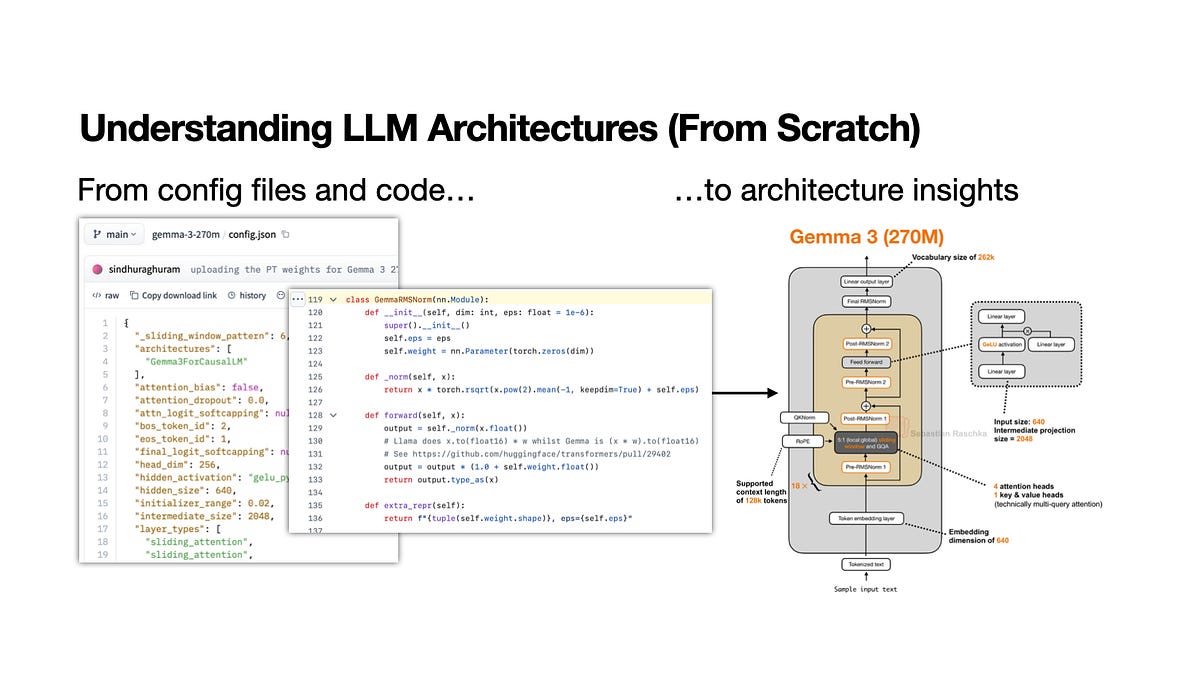

Framework For Analyzing Open-Weight Model Architectures

A new learning workflow provides a structured method for dissecting open-weight model releases. It emphasizes comparing attention mechanisms and tokenization strategies against established baselines, so developers can quickly spot where a new release deviates architecturally. Practitioners can use these steps to judge whether a model's design offers genuine performance gains or only incremental changes.
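One way to picture the baseline comparison is a field-by-field diff of two model configurations. The sketch below is only an illustration: both configs are made up, the key names are assumed to follow the style often seen in published `config.json` files, and the head-count rule for labeling the attention layout is a common heuristic rather than part of the workflow itself.

```python
# Hypothetical configs; the values are illustrative and not taken from any real release.
BASELINE = {
    "num_attention_heads": 32,
    "num_key_value_heads": 32,
    "vocab_size": 32000,
    "max_position_embeddings": 4096,
}
CANDIDATE = {
    "num_attention_heads": 32,
    "num_key_value_heads": 8,
    "vocab_size": 128000,
    "max_position_embeddings": 4096,
    "sliding_window": 4096,
}


def attention_variant(config):
    """Label the attention layout from head counts (assumed heuristic)."""
    heads = config["num_attention_heads"]
    # If the key is absent, assume one key/value head per query head.
    kv_heads = config.get("num_key_value_heads", heads)
    if kv_heads == heads:
        return "multi-head"
    if kv_heads == 1:
        return "multi-query"
    return "grouped-query"


def diff_configs(baseline, candidate):
    """Return {key: (baseline_value, candidate_value)} for every differing key."""
    keys = sorted(set(baseline) | set(candidate))
    return {
        key: (baseline.get(key), candidate.get(key))
        for key in keys
        if baseline.get(key) != candidate.get(key)
    }


deviations = diff_configs(BASELINE, CANDIDATE)
for key, (old, new) in deviations.items():
    print(f"{key}: {old} -> {new}")
print(attention_variant(BASELINE), "->", attention_variant(CANDIDATE))
```

A diff like this only shows where a design departs from the baseline. Whether a departure translates into a real performance gain still has to be checked with benchmarks.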