Research · 6h ago

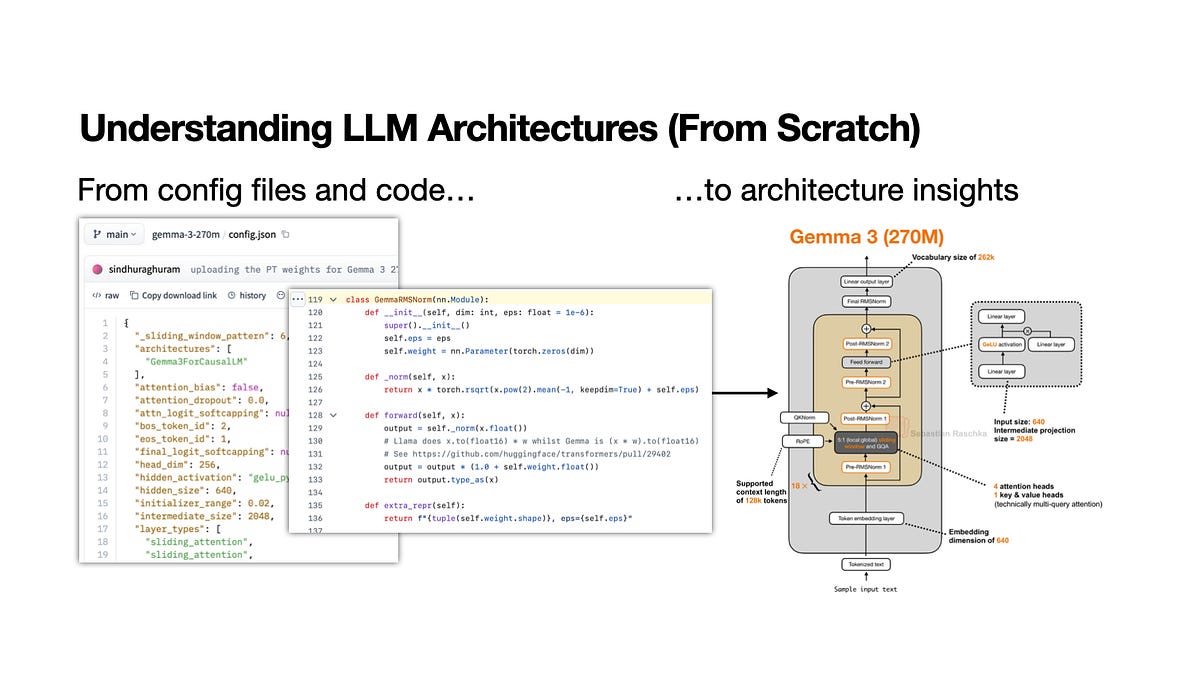

Guide To Analyzing Open-Weight Model Architectures

A new technical guide lays out a workflow for dissecting open-weight model releases by mapping their LLM architectures. The process focuses on comparing attention mechanisms and layer configurations across model versions. It provides a structured template that researchers can use to track performance shifts from one version to the next. This systematic approach is meant to help practitioners quickly identify which structural changes are driving model improvements.
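As a rough illustration of the kind of version-to-version comparison described here, the sketch below diffs two model configuration dictionaries and labels the attention variant of each. It is not taken from the guide itself. The key names (`num_hidden_layers`, `num_attention_heads`, `num_key_value_heads`, `hidden_size`) follow a convention common in published `config.json` files but are an assumption here, the helper names `diff_configs` and `attention_kind` are hypothetical, and the two configs are invented values rather than real releases.

```python
from typing import Any


def diff_configs(old: dict[str, Any], new: dict[str, Any]) -> dict[str, tuple[Any, Any]]:
    """Return {key: (old_value, new_value)} for every key whose value differs.

    A key present in only one config is reported with None on the other side.
    """
    changes: dict[str, tuple[Any, Any]] = {}
    for key in sorted(old.keys() | new.keys()):
        before, after = old.get(key), new.get(key)
        if before != after:
            changes[key] = (before, after)
    return changes


def attention_kind(config: dict[str, Any]) -> str:
    """Classify attention by comparing query heads with key/value heads.

    Assumes (hypothetically) that a missing num_key_value_heads means
    every query head has its own key/value head.
    """
    heads = config["num_attention_heads"]
    kv_heads = config.get("num_key_value_heads", heads)
    if kv_heads == heads:
        return "multi-head"
    if kv_heads == 1:
        return "multi-query"
    return "grouped-query"


# Invented example configs for two versions of a hypothetical model.
v1 = {"num_hidden_layers": 24, "num_attention_heads": 16, "hidden_size": 2048}
v2 = {
    "num_hidden_layers": 32,
    "num_attention_heads": 16,
    "num_key_value_heads": 4,
    "hidden_size": 2048,
}

changes = diff_configs(v1, v2)
for key, (before, after) in changes.items():
    print(f"{key}: {before} -> {after}")
print("attention:", attention_kind(v1), "->", attention_kind(v2))
```

A flat key-by-key diff like this only surfaces changes that are recorded in the configuration. Differences in training data or recipe would not show up, so it is best treated as a first pass before reading the release notes.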