
New LLMs Slash Long-Context Costs

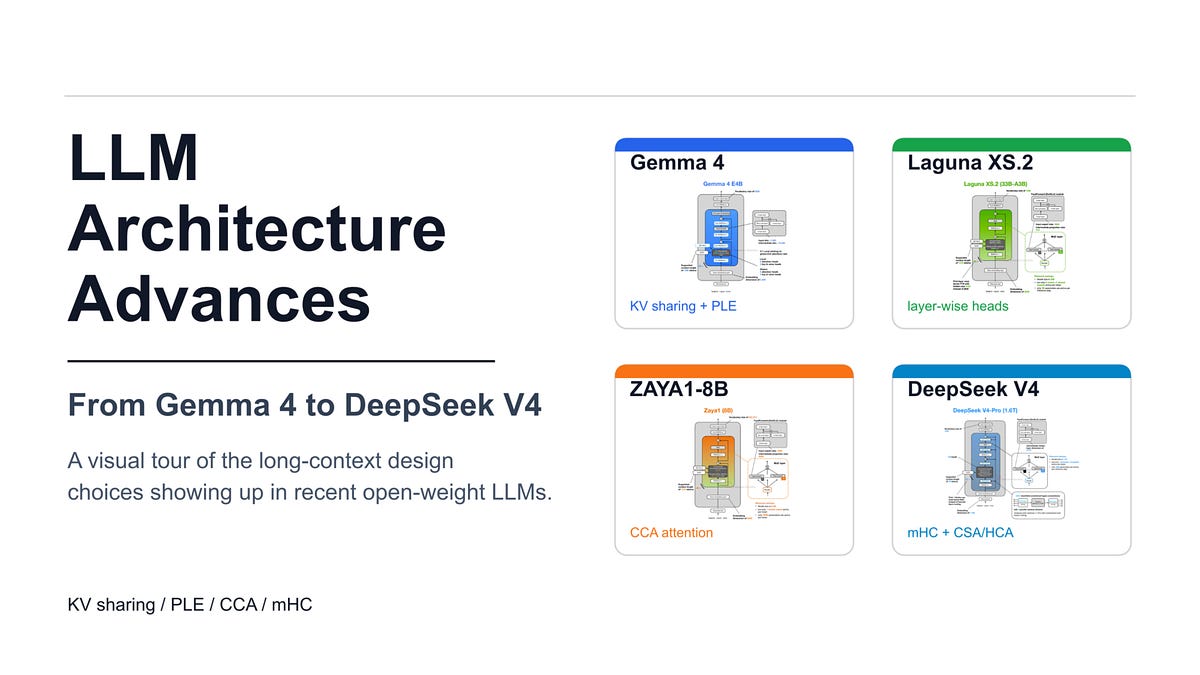

KV sharing and compressed attention now power Gemma 4 and DeepSeek V4, cutting the memory overhead of the key-value (KV) cache. That cache grows with every token of context, so shrinking it lets models handle very long contexts without memory and compute costs climbing as steeply. For developers, this means cheaper long-document processing. The trend favors inference efficiency over raw parameter counts, which makes open-weight models more viable in production.
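The memory argument can be made concrete with a rough estimate of KV-cache size. The sketch below compares three cases: a baseline where every layer caches its own keys and values, cross-layer KV sharing where groups of layers reuse one cache, and latent compression where each layer caches a single low-rank vector per token. All configuration numbers are hypothetical and are not the published specs of Gemma 4 or DeepSeek V4. The function names and the simplified sharing and latent schemes are generic stand-ins chosen for illustration, not those models' actual designs.

```python
"""Back-of-the-envelope KV-cache sizing (illustrative, hypothetical config)."""

from dataclasses import dataclass


@dataclass
class AttnConfig:
    # Hypothetical values only; NOT the specs of any real model.
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_elem: int = 2  # 2 bytes per value, e.g. bf16/fp16


def baseline_kv_bytes(cfg: AttnConfig, seq_len: int) -> int:
    """Every layer caches its own K and V vectors for every token."""
    per_token_per_layer = 2 * cfg.num_kv_heads * cfg.head_dim  # K + V
    return cfg.num_layers * per_token_per_layer * seq_len * cfg.bytes_per_elem


def shared_kv_bytes(cfg: AttnConfig, seq_len: int, layers_per_group: int) -> int:
    """Simplified cross-layer KV sharing (assumption): each group of
    `layers_per_group` layers reuses one K/V cache, so only
    ceil(num_layers / layers_per_group) caches are stored."""
    num_caches = -(-cfg.num_layers // layers_per_group)  # ceiling division
    per_token_per_layer = 2 * cfg.num_kv_heads * cfg.head_dim
    return num_caches * per_token_per_layer * seq_len * cfg.bytes_per_elem


def latent_kv_bytes(cfg: AttnConfig, seq_len: int, latent_dim: int) -> int:
    """Simplified latent compression (assumption): each layer caches one
    `latent_dim`-sized vector per token and rebuilds K and V from it at
    attention time. Ignores any extra per-token state a real design keeps."""
    return cfg.num_layers * latent_dim * seq_len * cfg.bytes_per_elem


if __name__ == "__main__":
    cfg = AttnConfig(num_layers=32, num_kv_heads=8, head_dim=128)
    seq_len = 128_000
    gib = 1024 ** 3
    print(f"baseline:          {baseline_kv_bytes(cfg, seq_len) / gib:.2f} GiB")
    print(f"shared (2 layers): {shared_kv_bytes(cfg, seq_len, 2) / gib:.2f} GiB")
    print(f"latent (dim 512):  {latent_kv_bytes(cfg, seq_len, 512) / gib:.2f} GiB")
```

In this made-up 32-layer configuration, sharing one cache between every two layers halves the footprint. A 512-dimensional latent is a quarter of the 2,048 K/V values each layer would otherwise store per token. Every variant still grows linearly with `seq_len`: these techniques shrink the per-token constant rather than changing the growth rate.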