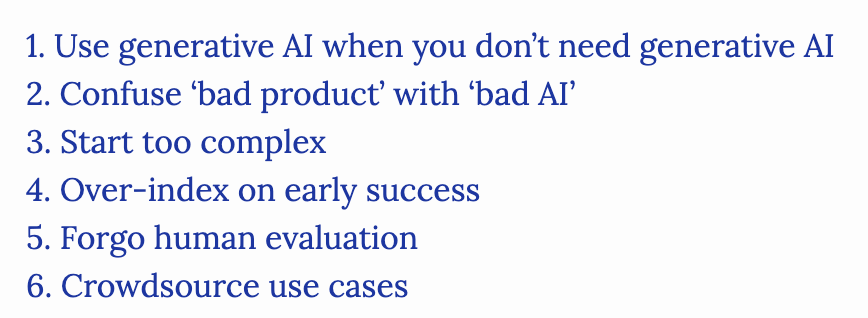

Common Pitfalls In Generative AI Development

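Overusing foundation models for tasks that do not require generative capabilities remains a frequent mistake. Chip Huyen highlights how teams often treat AI as a universal tool, even for optimization problems. This tendency produces needlessly inefficient architectures: routing such a problem through an LLM adds inference latency and cost, and the output carries no guarantee of optimality or of consistency across runs. Practitioners should default to deterministic logic, such as rules or classical optimization algorithms, rather than LLMs when the problem does not require natural language generation.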
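As an illustration, consider an optimization task a team might be tempted to hand to an LLM: choosing which features to ship under a fixed engineering budget. The minimal sketch below instead solves it exactly with a classic 0/1 knapsack dynamic program. The scenario, the function name `best_selection`, and the feature names, costs, and values are hypothetical and not taken from Huyen's discussion; costs are assumed to be non-negative integers.

```python
from typing import List, Tuple


def best_selection(items: List[Tuple[str, int, int]], budget: int) -> Tuple[int, List[str]]:
    """Exact 0/1 knapsack (hypothetical helper for illustration).

    items: (name, cost, value) tuples; costs are assumed to be non-negative integers.
    Returns (best_total_value, chosen_names). Same input always yields the same output.
    """
    n = len(items)
    # dp[i][b] = best total value using only the first i items with budget b
    dp = [[0] * (budget + 1) for _ in range(n + 1)]
    for i, (_, cost, value) in enumerate(items, start=1):
        for b in range(budget + 1):
            dp[i][b] = dp[i - 1][b]  # option 1: skip item i
            if cost <= b:  # option 2: take item i if it fits
                dp[i][b] = max(dp[i][b], dp[i - 1][b - cost] + value)

    # Walk back through the table to recover which items were taken
    chosen: List[str] = []
    b = budget
    for i in range(n, 0, -1):
        if dp[i][b] != dp[i - 1][b]:  # value changed, so item i was taken
            name, cost, _ = items[i - 1]
            chosen.append(name)
            b -= cost
    chosen.reverse()  # restore original item order
    return dp[n][budget], chosen


if __name__ == "__main__":
    # Hypothetical backlog: (feature, cost in engineer-days, estimated value)
    backlog = [("search", 5, 10), ("export", 4, 40), ("sso", 6, 30), ("themes", 3, 50)]
    print(best_selection(backlog, budget=10))  # → (90, ['export', 'themes'])
```

Unlike a sampled model response, this returns a provably optimal selection in O(n × budget) time and produces the identical answer on every run, which also makes it straightforward to unit-test.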